Multi-Stage Dockerfile for Clojure

Building a Clojure project from source to create a docker image provides an effective workflow for local testing and system integration.

A multi-stage Dockerfile uses a separate build stage to keep tools and temporary artefacts out of the final Docker image, producing a run-time image with minimal size and resource use.

Practicalli Project Templates provide a multi-stage Dockerfile

The templates include Dockerfile, .dockerignore and compose.yaml configurations that optimise the build and run of Clojure projects.

Practicalli Clojure CLI Config provides aliases for running community tools that support a wide range of development tasks.

Java 21 Long Term Support release recommended

This guide uses Docker images with Java 21, the latest Long Term Support release (LTS) as of October 2023.

Examples are provided for the Alpine Linux and Debian Linux operating systems.

Builder stage

The builder stage packages the Clojure service into an uberjar containing the Clojure standard library and the build of the Clojure service.

Practicalli uses the Official Clojure Docker image with Clojure CLI as the build environment.

Clojure Docker image uses the OpenJDK Eclipse Temurin image

OpenJDK Eclipse Temurin is used as the base for all Clojure Official docker images.

Practicalli recommends using the same OpenJDK Long Term Support image tag and operating system for the Clojure and Eclipse Temurin images.

Alpine Linux image variants are used to keep the image file size as small as possible, reducing local resource requirements (and image download time).

Set builder stage image using specific Java version

clojure:temurin-21-tools-deps-alpine image details

CLOJURE_VERSION will override the version of Clojure CLI in the Clojure image (which defaults to the latest Clojure CLI release). Alternatively, choose an image tag that pins a specific Clojure CLI version, e.g. temurin-21-tools-deps-1.11.1.1413-alpine

Debian Linux bookworm is the latest stable image; use the slim variant for a minimal set of packages.

As with the Alpine Linux image, CLOJURE_VERSION will override the version of Clojure CLI in the Clojure image (which defaults to the latest Clojure CLI release). Alternatively, choose an image tag that pins a specific Clojure CLI version, e.g. temurin-17-tools-deps-1.11.1.1182-alpine
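As a minimal sketch of setting the builder stage image, the following fragment starts the Dockerfile with the Alpine Linux image named above. The stage name `builder` matches the `--from=builder` reference used later in this guide. The commented Debian tag is an assumption that follows the same naming scheme and should be checked against the Clojure image tags on Docker Hub.

```dockerfile
# Builder stage: official Clojure image with Clojure CLI on Eclipse Temurin 21 (Alpine Linux)
FROM clojure:temurin-21-tools-deps-alpine AS builder

# Debian Linux alternative (assumed tag, verify it exists before use):
# FROM clojure:temurin-21-tools-deps-bookworm-slim AS builder
```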

Clojure CLI release history

Clojure.org Tool Releases page shows the current release and history of each released version.

Create a directory for building the project code and set it as the working directory within the Docker container, giving RUN commands a path to execute from.
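A short fragment showing one way to do this in the builder stage. The `/build` path is taken from the COPY example later in this guide. WORKDIR creates the directory if it does not exist, so the explicit `mkdir` is mainly there for clarity.

```dockerfile
# Create the build directory and make it the working directory
# for all following RUN, COPY and CMD instructions in this stage
RUN mkdir -p /build
WORKDIR /build
```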

Clojure CLI and tools.build

Practicalli Clojure - Tools.Build is the recommended approach to creating an Uberjar from a Clojure CLI project.

As long as the required files are copied, any build tool and task can be used to create the Uberjar file that packages the Clojure service for deployment.

Cache Dependencies

Clojure CLI is used to download library dependencies for the project and any other tooling used during the build stage, e.g. test runners, packaging tools to create an uberjar.

Once a library has been downloaded it becomes part of the cached layer so the download should only occur once.

If the deps.edn file changes then the layer will run again and update the cache if changes to the library dependencies were made.

Practicalli Makefile includes a deps task that downloads all the library dependencies for a project.

The deps Makefile target uses the clojure -P command to download the dependencies without running any other Clojure code or tools.

Makefile deps target using Clojure CLI

Copy the essential build configuration files:

- Makefile which defines the deps target
- deps.edn file which includes the project dependencies and a :project/build alias that includes the tools.build library
- build.clj script to define build tasks using tools.build

The dependencies are cached in the Docker overlay (layer) and this cache will be used on successive docker builds unless the deps.edn file or Makefile is changed.

Copy the deps.edn file to the build stage and use the clojure -P prepare (dry run) command to download the dependencies without running any Clojure code or tools.

The dependencies are cached in the Docker overlay (layer) and this cache will be used on successive docker builds unless the deps.edn file is changed.

deps.edn in this example contains the project dependencies and the :build alias used to build the Uberjar.
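The Clojure CLI approach to caching dependencies could look like the following sketch, which continues the builder stage. It assumes `/build` is the working directory and that the build alias can be used as a tool alias with `-T`. This guide mentions both `:build` and `:project/build` as alias names, so use whichever name deps.edn defines.

```dockerfile
# Copy only the dependency configuration, so this layer is
# rebuilt only when deps.edn changes
COPY deps.edn /build/

# Download the project dependencies without running any Clojure code
RUN clojure -P

# Assumption: the build alias is named :project/build (replace with :build if
# that is the name in deps.edn); prepares the tools.build dependencies as well
RUN clojure -P -T:project/build
```

For the Makefile approach, the equivalent would be to copy Makefile, deps.edn and build.clj and then run `make deps`.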

Build Uberjar

Copy all the project files to the docker builder working directory, creating another overlay.

Copying the src and other files in a separate overlay to the deps.edn file ensures that changes to the Clojure code or configuration files do not trigger downloading of the dependencies again.

Run the dist task to generate an Uberjar for distribution.

Run the tools.build command to generate an Uberjar.

Use tools.build to generate an Uberjar

:project/build is an alias that includes the Clojure tools.build dependency, which is used to build the Clojure project into an Uberjar.
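A sketch of the copy and build steps in the builder stage. The `uberjar` task name is hypothetical and stands for whichever function build.clj defines to create the Uberjar. The commented line shows the Makefile alternative using the dist task described above.

```dockerfile
# Copy the remaining project files in a separate layer, so code changes
# do not invalidate the cached dependency layer
COPY ./ /build

# Assumption: build.clj defines an `uberjar` function, run via the :project/build alias
RUN clojure -T:project/build uberjar

# Makefile alternative:
# RUN make dist
```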

Docker Ignore patterns

The .dockerignore file in the root of the project defines file and directory patterns that Docker will ignore with the COPY command. Use .dockerignore to avoid copying files that are not required for the build.

Keep the .dockerignore file simple by excluding all files with the * pattern and then use the ! character to explicitly add files and directories that should be copied.

test-data directory is commonly used by Practicalli to include scripts and data for testing the system once running.

The classic approach for Docker ignore patterns is to specify all files and directories to exclude in a Clojure project, although this can lead to more maintenance as the project grows.

Run-time stage

The Alpine Linux variant of the Eclipse Temurin image is used because the Alpine Linux base is around 5MB in size, compared to 60MB or more for other operating system images.
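A minimal sketch of starting the run-time stage. The stage name `final` is an illustrative choice, and the tag assumes the Java 21 JDK image on Alpine Linux to match the builder stage.

```dockerfile
# Run-time stage on the Alpine Linux variant of Eclipse Temurin 21
# (stage name `final` is an assumption, any name can be used)
FROM eclipse-temurin:21-alpine AS final
```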

Run-time images are often stored in a registry, e.g. Amazon Elastic Container Registry (ECR). LABEL adds metadata to the image, helping it to be identified in a registry or in a local development environment.

LABEL org.opencontainers.image.authors="nospam+dockerfile@practicall.li"

LABEL io.github.practicalli.service="Gameboard API Service"

LABEL io.github.practicalli.team="Practicalli Engineering Team"

LABEL version="1.0"

LABEL description="Gameboard API service"

Use docker inspect to view the metadata

Additional Packages

Optionally, add packages to support running the service or to help debug issues in the container when it is running. For example, add dumb-init to manage processes, and the curl and jq binaries for manually running system integration testing scripts for API testing.

apk is the package tool for Alpine Linux and the --no-cache option ensures the package index is not cached in the resulting image, saving resources. Alpine Linux recommends setting versions to use any point release with the ~= approximately equal version, so any same major.minor version of the package can be used.

Check Alpine packages if new major versions are no longer available (low frequency)

apt is the Advanced Package Tool for Debian Linux and any Debian-based distribution like Ubuntu.

Add Debian packages

Check Debian Linux packages if new major versions are no longer available (low frequency)
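The package installation could be sketched as follows for the run-time stage. The pinned dumb-init version is illustrative only and should be checked against the package index for the Alpine Linux release in use. curl and jq are left unpinned here and can be pinned in the same way. The Debian variant is shown as a comment because only one of the two applies to a given base image.

```dockerfile
# Alpine Linux: --no-cache avoids storing the package index in the image
# (the dumb-init version is an illustrative assumption)
RUN apk add --no-cache \
    dumb-init~=1.2.5 \
    curl \
    jq

# Debian Linux alternative: remove the package lists after install
# to keep the layer small
# RUN apt-get update && \
#     apt-get install -y --no-install-recommends dumb-init curl jq && \
#     rm -rf /var/lib/apt/lists/*
```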

Non-root group and user

Docker containers run as the root user by default, so if a container is compromised the root permissions could lead to a compromised host system. Docker recommends creating a user and group in the run-time image to run the service.

Create a non-privileged user account

Create directory to contain service

Create directory to contain service archive, owned by non-root user
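A sketch of these steps for an Alpine Linux run-time image. The `clojure` user and group names and the `/service` directory follow the COPY example later in this guide. Switching to the user with USER is an assumption about where the original Dockerfile drops root privileges.

```dockerfile
# Create a non-privileged system group and user (Alpine Linux / BusyBox syntax)
RUN addgroup -S clojure && adduser -S clojure -G clojure

# Debian Linux alternative:
# RUN groupadd --system clojure && \
#     useradd --system --gid clojure --no-create-home clojure

# Create the service directory, owned by the non-root user
RUN mkdir -p /service && chown -R clojure:clojure /service

# Run all following instructions, and the service itself, as the non-root user
USER clojure
```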

Copy Uberjar to run-time stage

Create a directory to run the service or use a known existing path that will not clash with any existing files from the image.

Set the working directory and copy the uberjar archive file from Builder image

Copy Uberjar from build stage
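One way to express this step is shown below. The destination path matches the jar file used in the ENTRYPOINT example later in this guide. The source path under `/build/target/` is an assumption about where the build task writes the Uberjar and should be adjusted to the project's build configuration.

```dockerfile
# Run the service from its own directory
WORKDIR /service

# Copy only the Uberjar from the builder stage, owned by the non-root user
# (source path is an assumption, it depends on the build.clj configuration)
COPY --from=builder --chown=clojure:clojure /build/target/practicalli-service.jar /service/practicalli-service.jar
```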

Optionally, add system integration testing scripts to the run-time stage for testing from within the docker container.

RUN mkdir -p /service/test-scripts

COPY --from=builder --chown=clojure:clojure /build/test-scripts/curl--* /service/test-scripts/

Service Environment variables

Define values for environment variables should they be required (usually for debugging), ensuring no sensitive values are used. Environment variables are typically set by the service provisioning the containers (AWS ECS / Kubernetes) or on the local OS host during development (Docker Desktop).

# optional over-rides for Integrant configuration

# ENV HTTP_SERVER_PORT=

# ENV MYSQL_DATABASE=

ENV SERVICE_PROFILE=prod

Java Virtual Machine Optimisation

A Clojure Uberjar runs on the Java Virtual Machine, which is a highly optimised environment that rarely needs adjusting unless there are noticeable performance or resource use issues.

Minimum and maximum heap sizes, i.e. -XX:MinRAMPercentage and -XX:MaxRAMPercentage are typically the only optimisations required.

java -XshowSettings -version displays VM settings (vm), Property settings (property), Locale settings (locale), Operating System Metrics (system) and the version of the JVM used. Add the category name to show only a specific group of settings, e.g. java -XshowSettings:system -version.

JDK_JAVA_OPTIONS can be used to tailor the operation of the Java Virtual Machine, although the benefits and constraints of options should be well understood before using them (especially in production).

Show settings on JVM startup

Show system settings on startup, force container mode and set memory heap maximum to 85% of host memory size.
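The settings described above can be sketched as a single ENV directive in the run-time stage. The option values are those named in the text and should be treated as a starting point rather than tuned recommendations.

```dockerfile
# Show system settings on startup, force container mode and
# limit the maximum heap to 85% of the available memory
ENV JDK_JAVA_OPTIONS="-XshowSettings:system -XX:+UseContainerSupport -XX:MaxRAMPercentage=85"
```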

Use relative heap memory settings, not fixed sizes

Relative heap memory settings (-XX:MaxRAMPercentage) should be used for containers rather than the fixed value options (-Xmx), as the provisioning service for the container may control and change the resources available to a container on deployment (especially a Kubernetes system).

Options that are most relevant to running Clojure & Java Virtual Machine in a container include:

- -XshowSettings:system display (container) system resources on JVM startup
- -XX:InitialRAMPercentage Percentage of real memory used for initial heap size
- -XX:MaxRAMPercentage Maximum percentage of real memory used for maximum heap size
- -XX:MinRAMPercentage Minimum percentage of real memory used for maximum heap size on systems with small physical memory
- -XX:ActiveProcessorCount specifies the number of CPU cores the JVM should use regardless of container detection heuristics
- -XX:+UseContainerSupport force JVM to run in container mode, disabling container detection (only useful if JVM not detecting container environment)
- -XX:+UseZGC low latency Z Garbage collector (read the Z Garbage collector documentation and understand the trade-offs before use) - the default Hotspot garbage collector is the most effective choice for most services

Only optimise if performance test data shows issues

Without performance testing of a specific Clojure service and analysis of the results, let the JVM use its own heuristics to determine the optimal strategies it should use.

Expose Clojure service

If the Clojure service listens to network requests when running, then the port it is listening on should be exposed so the world outside the container can communicate with the Clojure service.

e.g. expose port of HTTP Server that runs the Clojure service
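For illustration, the directive takes the port number the service listens on. Port 8080 is an assumed value and should match the HTTP server port configured for the service.

```dockerfile
# Document the port the HTTP server listens on (8080 is an assumed value)
EXPOSE 8080
```

EXPOSE documents the port in the image metadata. The port still needs to be published when the container is run, as described in the Build and Run section.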

Entrypoint

Finally define how to run the Clojure service in the container. The java command is used with the -jar option to run the Clojure service from the Uberjar archive.

The java command will use arguments defined in JDK_JAVA_OPTIONS.

ENTRYPOINT directive defines the command to run the service

Docker ENTRYPOINT directive

ENTRYPOINT is the recommended way to run a service in Docker. CMD can be used to pass additional arguments to the ENTRYPOINT command, or used instead of ENTRYPOINT.

jshell is the default ENTRYPOINT for the Eclipse Temurin image. jshell will run if a CMD directive is not included in the run-time stage of the Dockerfile.

The ENTRYPOINT command runs as process id 1 (PID 1) inside the docker container. In a Linux system PID 1 should respond to all TERM and SIGTERM signals.

dumb-init provides a simple process supervisor and init system, designed to run as PID 1 and manage all signals and child processes effectively.

Use dumb-init as the ENTRYPOINT command and CMD to pass the java command to start the Clojure service as an argument. dumb-init ensures TERM signals are sent to the Java process and all child processes are cleaned up on shutdown.

ENTRYPOINT to cleanly manage service
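The combination described above can be sketched as follows, assuming dumb-init was installed to `/usr/bin/dumb-init` by the package step and the Uberjar was copied to `/service/practicalli-service.jar`.

```dockerfile
# dumb-init runs as PID 1 and forwards signals to the java process
ENTRYPOINT ["/usr/bin/dumb-init", "--"]

# Default command passed to dumb-init; the java command picks up
# any options defined in JDK_JAVA_OPTIONS
CMD ["java", "-jar", "/service/practicalli-service.jar"]
```

Keeping the java command in CMD means a different command can be supplied as arguments to docker run, for example to open a shell in the container for debugging.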

Alternatively, run dumb-init and java within the ENTRYPOINT directive, ENTRYPOINT ["/usr/bin/dumb-init", "--", "java", "-jar", "/service/practicalli-service.jar"]. This approach cannot be overridden with an additional CMD directive on the command line when using docker run.

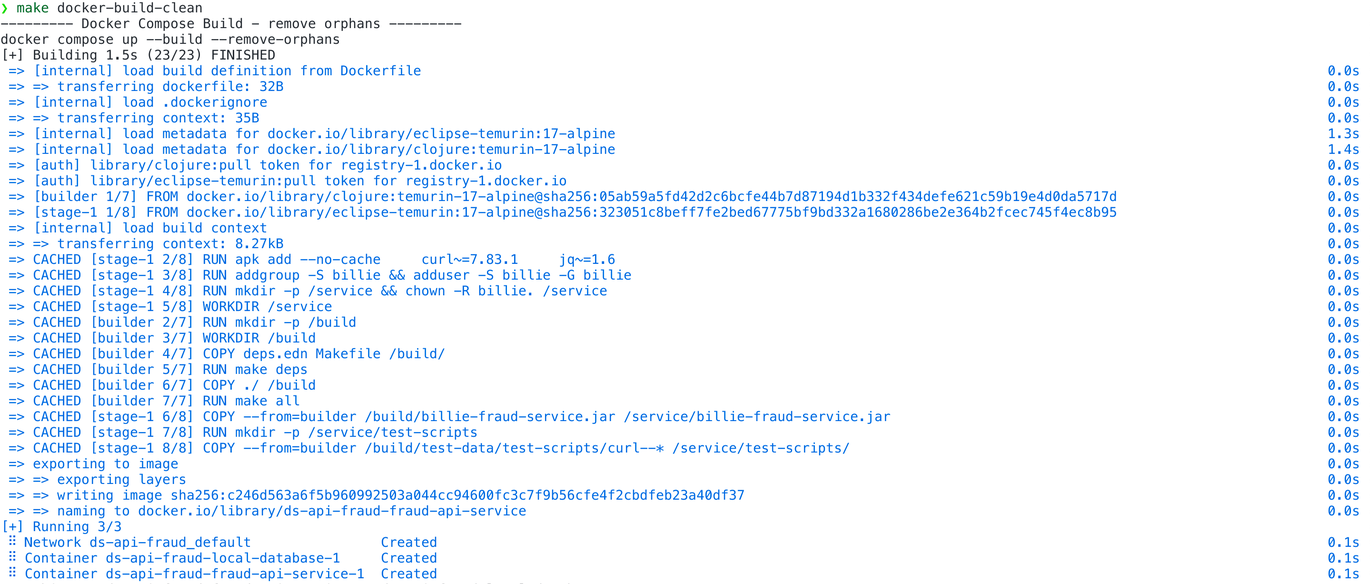

Build and Run

Ensure Docker services are running, i.e. start Docker Desktop.

Build the service and create an image to run the Clojure service in a container with docker build. Use a --tag to help identify the image and specify the context (in this example the root directory of the current project, .)

After the first build of the Docker image, any parts of the build that haven't changed will use their respective cached layers in the builder stage. This can lead to very fast, even near-zero time builds.

Maximise use of Docker cache

Maximising use of the Docker cache by careful consideration of command order and design in a Dockerfile can have a significant positive effect on build speed.

Each command is an overlay (layer) in the Docker image and if its respective files have not changed, then the cached version of the command will be used.

Run the built image in a docker container using docker run, publishing the port number so it can be used from the host (developer environment or deployed environment). Use the name of the image created by the tag in the docker build command.

Orchestrate multiple services with Compose

Create a compose.yaml file that defines all the services to run to support local integration testing, optionally adding health checks and conditional starts.

Run docker compose up to start all the services.